Neural Networks in FixPix

The following are all the neural network components currently supported by FixPix (as plugins that are downloadable from within FixPix after it is installed).

Generative AI - Instruct Pix2Pix

What was seen as science fiction only a short time ago was made very real by generative AI, which first brought us DALL-E, then ChatGPT and all that came after them. Luckily, the open source community has created its own versions of this new technology. A great example is Instruct Pix2Pix, which lets you write in plain English what you want to change in a picture.

Some examples of Instruct Pix2Pix on the top left image, created by the FixPix plugin. The instructions were simple, like:

"Give her a ponytail", "Make her a bronze statue", "Make her older", "Make her a cubist painting" etc.

Experimenting with Instruct Pix2Pix is fun. The example below shows results of prompting to change hairstyle and age, but you can give any instructions and experiment on various images to see what works best.

InstructPix2Pix was introduced in late 2022 in the paper "InstructPix2Pix: Learning to Follow Image Editing Instructions" by Tim Brooks, Aleksander Holynski, Alexei A. Efros.

LaMa Inpainting

Image inpainting is a method of letting a program intelligently try to "guess" what pixels should exist behind an occluded part of the image. As in many other fields, neural networks have been successfully used to achieve this task as well. FixPix has a plugin for LaMa Inpainting, a successful neural net that achieves impressive results:

- Choose an image to inpaint

- Paint over the areas you wish to hide

- LaMa Inpainting attempts to restore the hidden parts.
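
To make the idea of a painted-over mask concrete, here is a minimal Python sketch. It is not LaMa and not what the FixPix plugin runs. It is a naive stand-in that fills each masked pixel with the average of its already-known neighbours, working inward from the edge of the mask. The function name `naive_inpaint` and the list-of-lists grayscale image format are assumptions made purely for illustration.

```python
def naive_inpaint(image, mask, max_passes=100):
    """Fill masked pixels from the average of already-known neighbours.

    image: 2D list of grayscale values (hypothetical format).
    mask:  2D list of booleans, True where the pixel was painted over.
    Returns a new 2D list; the input image is not modified.
    """
    height, width = len(image), len(image[0])
    result = [row[:] for row in image]
    unknown = {(y, x) for y in range(height) for x in range(width) if mask[y][x]}

    for _ in range(max_passes):
        if not unknown:
            break
        filled = {}
        for (y, x) in unknown:
            # Only neighbours that are inside the image and already known count.
            neighbours = [
                result[ny][nx]
                for ny, nx in ((y - 1, x), (y + 1, x), (y, x - 1), (y, x + 1))
                if 0 <= ny < height and 0 <= nx < width and (ny, nx) not in unknown
            ]
            if neighbours:
                filled[(y, x)] = sum(neighbours) / len(neighbours)
        if not filled:
            break  # nothing known to fill from (the whole image is masked)
        for (y, x), value in filled.items():
            result[y][x] = value
        unknown.difference_update(filled)
    return result
```

A fill like this can only spread the surrounding values into the hole, so it blurs textures and edges. Learned models such as LaMa exist to do better than that.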

Inpainting also works on paintings. As an example, here it is used on Edward Hopper's painting "Morning Sun" to remove the woman (just in case the existing sense of solitude and alienation in the original isn't quite enough).

LaMa Inpainting was introduced in late 2021 in the paper "Resolution-robust Large Mask Inpainting with Fourier Convolutions" by Roman Suvorov, Elizaveta Logacheva, Anton Mashikhin, Anastasia Remizova, Arsenii Ashukha, Aleksei Silvestrov, Naejin Kong, Harshith Goka, Kiwoong Park, Victor Lempitsky.

Generative AI - Stable Diffusion Inpainting & Outpainting

LaMa Inpainting above is really good at quickly filling in an occluded area with a good guess based on the surrounding theme, but it doesn't know much about the internal logic of an image. If you occlude part of a face, for example, LaMa Inpainting will not know how to restore it other than to fill it with some matching background texture and color.

Stable Diffusion inpainting, however, uses what it has learned by training on 2.3 billion images to reconstruct the missing parts in a way that makes sense, which enables some cool things (images on the left are the originals and images on the right are attempts to restore the occluded areas):

Examples made with FixPix's Stable Diffusion inpainting:

Examples made with FixPix's Stable Diffusion outpainting:

U2-Net

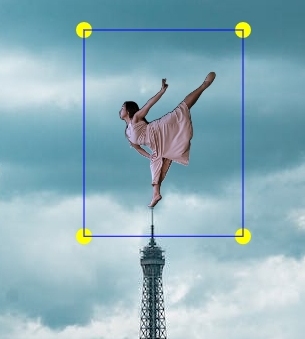

U2-Net is a neural network that can detect and separate the main object in a picture from its background. This is done using a nested U-shaped design (small U-Net-like blocks placed inside a larger U-Net-like structure) that allows the program to analyze the picture in great detail without using too much computer memory.

One of the things you can do with U2-Net is to create sketches from photos, as illustrated in the above image.

With FixPix you can also use it to remove the background of a picture and create a silhouette of the foreground image:

After you remove backgrounds from images, you can use the Montage tool in FixPix to place them on top of other backgrounds.
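
Conceptually, background removal produces a mask that says how strongly each pixel belongs to the foreground, and a montage blends two images using that mask. The sketch below illustrates this in plain Python under stated assumptions: the mask is a grid of values between 0 and 1 (the kind of saliency map a network like U2-Net outputs), images are grids of (R, G, B) tuples, and the names `silhouette` and `montage` are hypothetical, not FixPix functions.

```python
def silhouette(saliency, threshold=0.5):
    """Turn a saliency map (values 0..1) into a black-on-white silhouette.

    Pixels at or above the threshold count as foreground (0 = black),
    everything else as background (255 = white).
    """
    return [[0 if value >= threshold else 255 for value in row] for row in saliency]


def montage(foreground, background, saliency):
    """Alpha-blend the foreground over the background.

    The saliency value of each pixel is used as its opacity: 1.0 keeps the
    foreground pixel, 0.0 keeps the background pixel. All three grids are
    assumed to have the same size.
    """
    blended = []
    for fg_row, bg_row, alpha_row in zip(foreground, background, saliency):
        blended.append([
            tuple(round(a * f + (1 - a) * b) for f, b in zip(fg_px, bg_px))
            for fg_px, bg_px, a in zip(fg_row, bg_row, alpha_row)
        ])
    return blended
```

Keeping the mask as fractional values rather than a hard 0/1 cut gives softer edges around hair and other fine detail when the cutout is placed on a new background.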

The main contributors to U2-Net are Xuebin Qin, Zichen Zhang, Chenyang Huang, Masood Dehghan, Osmar R. Zaiane and Martin Jagersand. They developed the model and published their paper "U2-Net: Going Deeper with Nested U-Structure for Salient Object Detection" in Pattern Recognition in 2020.

AnimeGAN2

AnimeGANv2 is an improved version of AnimeGAN that transforms a photo into an anime style. It was developed by Asher Chan.

It uses a type of neural network called a GAN (Generative Adversarial Network), which lets the program analyze the photo and create a new image that looks like it was drawn in an anime style.

GFPGAN

GFPGAN is a neural network that uses advanced techniques to restore old or low-quality images of faces. It does this by using information from a large collection of high-quality face images to fill in missing details and improve the overall appearance of the restored image.

Above are some samples in which FixPix's GFPGAN plugin was used to produce higher-resolution versions of existing photographs. The images displayed are (from left to right): a young Albert Einstein, a zoom in on David Bowie's hair and mouth, and Charlie Chaplin as a boy.

The main contributors to the project are Xintao Wang, Yu Li, Honglun Zhang, and Ying Shan.

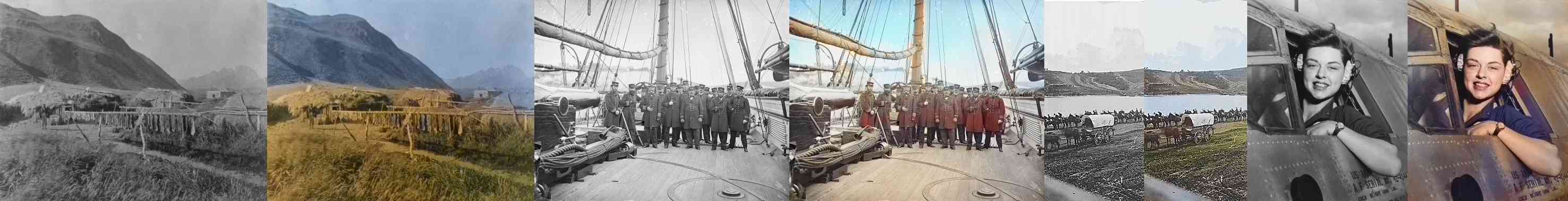

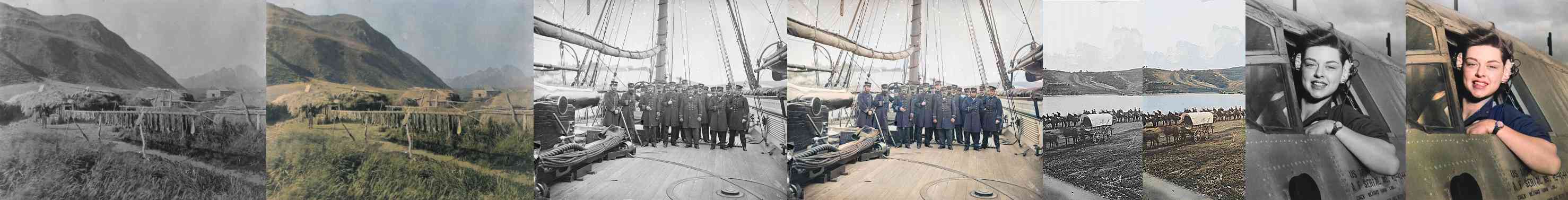

Colorful Image Colorization

Image colorization is the process of adding color to black and white or grayscale images.

In 2016, Richard Zhang, Phillip Isola, and Alexei A. Efros published a paper titled "Colorful Image Colorization" in which they presented a CNN (convolutional neural network) for colorizing grayscale images. They trained the network on 1.3 million images.

DeOldify

DeOldify by Jason Antic is an image colorization method that improves upon the original "Colorful Image Colorization" method and provides two separate machine learning models for colorizing a grayscale image.

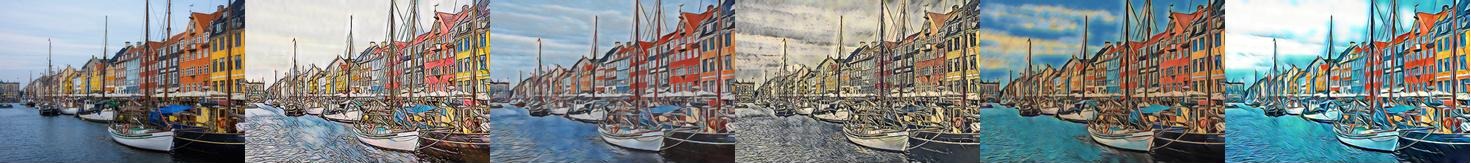

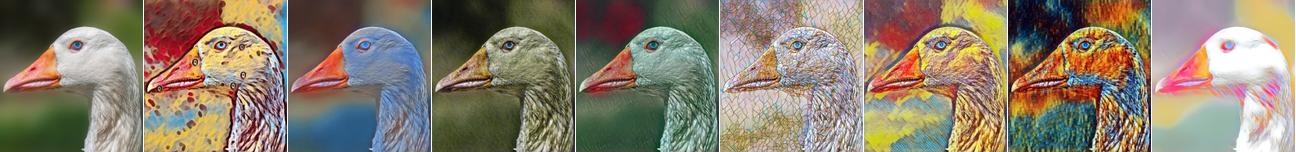

AdaAttN

AdaAttN is a tool that enables adding artistic styles to images. It does this by paying attention to the details in both the original image and the style image, and then combining them in a smart way. This results in high-quality images that have the artistic style of one image applied to the content of another. It's like having a computer artist that can paint your photos in the style of famous artists.
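
The core idea can be sketched in a few lines of plain Python. This is a simplified illustration, assuming a single feature layer and leaving out the learned projections and the surrounding encoder and decoder of the real model; the function names and the list-of-vectors feature format are hypothetical. For every content position, attention weights over the style positions give a weighted mean and standard deviation of the style features, which are then used to rescale and shift the normalized content feature.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of numbers."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def channel_normalize(features, eps=1e-5):
    """Give every channel zero mean and (roughly) unit variance across positions."""
    count, channels = len(features), len(features[0])
    means = [sum(f[c] for f in features) / count for c in range(channels)]
    stds = [
        math.sqrt(sum((f[c] - means[c]) ** 2 for f in features) / count + eps)
        for c in range(channels)
    ]
    return [[(f[c] - means[c]) / stds[c] for c in range(channels)] for f in features]


def adaattn(content, style):
    """Simplified attention-based normalization (assumption: no learned layers).

    content, style: lists of feature vectors, one vector per image position.
    Returns one stylized feature vector per content position.
    """
    channels = len(content[0])
    content_norm = channel_normalize(content)
    style_norm = channel_normalize(style)
    output = []
    for query in content_norm:
        # How much this content position attends to each style position.
        weights = softmax([sum(q * k for q, k in zip(query, key)) for key in style_norm])
        stylized = []
        for c in range(channels):
            mean = sum(w * s[c] for w, s in zip(weights, style))
            second_moment = sum(w * s[c] ** 2 for w, s in zip(weights, style))
            std = math.sqrt(max(second_moment - mean ** 2, 0.0))
            # Rescale and shift the normalized content feature.
            stylized.append(std * query[c] + mean)
        output.append(stylized)
    return output
```

Because the mean and standard deviation are computed separately for each content position, different regions of the photo can pick up different parts of the style image.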

The FixPix AdaAttN plugin enables you to select any image as a style and change any other image so that it looks like that style.

AdaAttN stands for Adaptive Attention Normalization and was proposed by Songhua Liu, Tianwei Lin, Dongliang He, Fu Li, Meiling Wang, Xin Li, Zhengxing Sun, Qian Li, and Errui Ding in their paper "AdaAttN: Revisit Attention Mechanism in Arbitrary Neural Style Transfer" published in 2021.

Fast Neural Style

Fast Neural Style is a technique that allows computers to quickly add artistic styles to images. The method is much faster than the original neural style transfer algorithm, which can take several minutes to produce a single stylized image. This model does a good job of transferring the "feel" and style to the target image, but unlike AdaAttN, a different model has to be trained for every new style. The FixPix plugin for Fast Neural Style comes prebundled with 10 different styles.

It was developed by Justin Johnson, Alexandre Alahi, and Li Fei-Fei and presented at ECCV 2016.

CartoonGAN

CartoonGAN is a deep learning model for transforming photos of real-world scenes into cartoon-style images. It was developed by Yang Chen, Yu-Kun Lai, and Yong-Jin Liu and presented at CVPR 2018.